Twelve months ago, every enterprise budget meeting included the same question: “What are we doing about AI?” In 2026, the question has shifted. It is no longer about whether to invest, but where the money should actually go. And the answer, for most organisations I work with or observe, is not what the headlines would have you believe.

The reality of AI investment in 2026 is quieter, more practical, and far more infrastructure-heavy than the chatbot-dominated narrative suggests. This article is the first in a ten-part series exploring how AI and IT are genuinely intersecting right now — not in press releases, but in real teams, real budgets, and real engineering decisions.

AI & IT in 2026 — Full Series

- 1. Where Businesses Are Actually Investing in AI in 2026

- 2. How AI Is Reshaping the Developer’s Daily Workflow

- 3. Platform Engineering in the Age of AI

- 4. The Security Risks Businesses Aren’t Talking About

- 5. The Hidden Risks of Embedding AI Into Your Workflows

- 6. AI and the IT Job Market: What’s Really Happening

- 7. What Happens to Engineers Who Refuse to Use AI

- 8. Is AI a Paradigm Shift? Lessons from Cloud and Virtualisation

- 9. The AI-Augmented IT Team: What 2027 Looks Like

- 10. Your Move: A Practical Framework for IT Professionals

TL;DR — AI Investment at a Glance

- Where the money goes — Developer tools, security, and ops automation dominate real AI spend, not consumer chatbots

- Infrastructure vs products — The smartest organisations are investing in AI infrastructure that compounds, not flashy AI features

- Curious vs committed — The gap between organisations experimenting with AI and those embedding it is widening fast

- Budget implications — IT teams planning 2026-2027 budgets need to treat AI tooling as core infrastructure, not R&D

The AI Budget Conversation Has Shifted

In early 2025, most enterprise AI conversations were about possibility. Could AI write our marketing copy? Could it handle customer support? Could it summarise meetings? These are valid questions, and some organisations found genuinely useful answers. But the pattern I have seen across dozens of businesses in 2026 is different. The conversation has matured from “what can AI do?” to “what should we actually pay for?”

That shift matters enormously. When AI was a novelty, budgets were discretionary — experimental pots of money allocated to innovation teams or skunkworks projects. Now, AI spend is appearing in core operational budgets. It is sitting alongside monitoring tools, CI/CD platforms, and cloud infrastructure costs. This is not a sign that AI has become boring. It is a sign that it has become essential.

The most telling indicator is where finance teams are categorising AI spend. In 2025, it was typically under “R&D” or “Innovation.” In 2026, it is increasingly under “Engineering Tools” or “Operational Technology.” That reclassification tells you everything about where the real value is landing.

What surprised me most is how little of the actual spend goes toward the consumer-facing AI products that dominate tech media. The flashy chatbot on your website? That is a rounding error compared to what engineering teams are spending on AI-assisted development, automated security scanning, and intelligent operations tooling.

Where the Money Is Actually Going

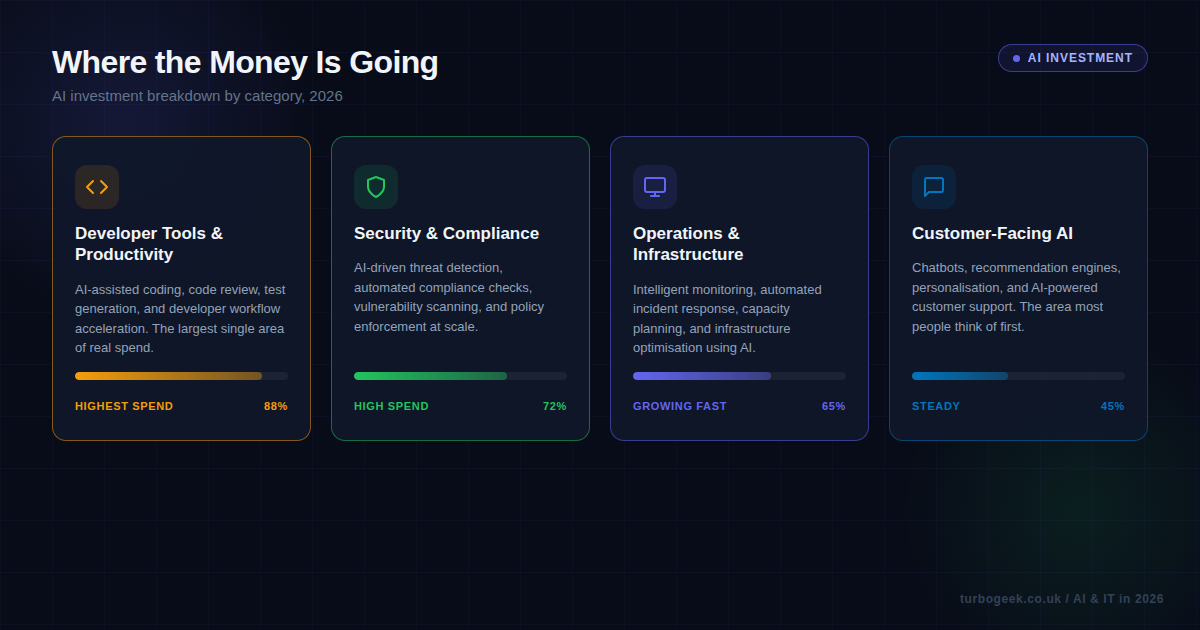

When you strip away the marketing noise and look at actual procurement data, four investment areas dominate AI budgets in 2026. None of them involve a chatbot greeting your customers.

Developer tools and productivity is the largest single area of spend. This includes AI-assisted coding tools like GitHub Copilot, Cursor, and Claude Code; automated code review platforms; test generation systems; and workflow acceleration tools that reduce the time between writing code and shipping it. The appeal is straightforward: these tools show measurable productivity gains within weeks, and the ROI calculation is simple enough for any CFO to understand.

Security and compliance is the second major area. AI-driven threat detection, automated vulnerability scanning, and policy enforcement tools are seeing significant adoption. Compliance teams, historically slow to adopt new technology, are embracing AI tools that can scan codebases for regulatory issues faster than any human audit. The driver here is not innovation — it is risk reduction, which is an easier sell in any boardroom.

Operations and infrastructure comes third but is growing fastest. Intelligent monitoring that can predict incidents before they happen, automated incident response playbooks, and capacity planning tools that optimise cloud spend are all seeing rapid adoption. AIOps is no longer a buzzword — it is a budget line item.

Customer-facing AI (the chatbots, recommendation engines, and personalisation tools that most people think of when they hear “AI”) is actually the smallest of the four categories in terms of new investment. That is not because these tools lack value. It is because many organisations already invested in this area during the 2023-2024 wave and are now in maintenance mode rather than expansion mode.

AI Infrastructure vs AI Products: The Real Divide

There is a distinction that I think gets lost in most AI investment discussions, and it is the single most important factor separating organisations that will benefit from AI long-term from those that will not. The distinction is between investing in AI products and investing in AI infrastructure.

An AI product is something you buy off the shelf and plug in. A customer support chatbot. A content generation tool. A meeting summariser. These products deliver value, sometimes significant value, but they are commodities. When everyone has access to the same chatbot API, the competitive advantage evaporates quickly.

AI infrastructure is different. It is the foundation that allows an organisation to build, customise, and improve AI capabilities over time. This includes internal model fine-tuning pipelines, proprietary training data management, AI-aware CI/CD pipelines, prompt engineering frameworks, and the governance structures that ensure AI tools are used safely and effectively.

The organisations I see making the smartest bets in 2026 are not asking “which AI product should we buy?” They are asking “how do we build the capability to evaluate, integrate, and improve AI tools continuously?” That is an infrastructure question, not a product question.

Consider the difference between two approaches to AI-assisted code review. Organisation A buys an AI code review tool and connects it to their repository. Organisation B invests in building a code review pipeline that can incorporate any AI model, trained on their own codebase patterns and coding standards, with feedback loops that improve accuracy over time. Organisation A gets value on day one. Organisation B gets compounding value over years.
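To make Organisation B's approach more concrete, here is a minimal sketch of a review pipeline that is independent of any one model and learns from reviewer feedback. Every name in it (`Reviewer`, `TodoReviewer`, `ReviewPipeline`, the thresholds) is a hypothetical illustration rather than any vendor's API, and the "feedback loop" is deliberately simple: it only suppresses rules that human reviewers keep rejecting.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class Finding:
    rule: str
    line: int
    message: str


class Reviewer(Protocol):
    """Any model or tool that can turn a diff into findings."""

    def review(self, diff: str) -> list[Finding]: ...


class TodoReviewer:
    """Stand-in backend (an assumption): flags added lines containing TODO."""

    def review(self, diff: str) -> list[Finding]:
        return [
            Finding("todo-left-in-code", number, "TODO in added line")
            for number, text in enumerate(diff.splitlines(), start=1)
            if text.startswith("+") and "TODO" in text
        ]


@dataclass
class ReviewPipeline:
    reviewer: Reviewer
    # rule -> [accepted, rejected] votes from human reviewers
    feedback: dict[str, list[int]] = field(default_factory=dict)
    min_votes: int = 5            # illustrative threshold
    min_acceptance: float = 0.3   # illustrative threshold

    def record_feedback(self, rule: str, accepted: bool) -> None:
        counts = self.feedback.setdefault(rule, [0, 0])
        counts[0 if accepted else 1] += 1

    def _is_trusted(self, rule: str) -> bool:
        accepted, rejected = self.feedback.get(rule, [0, 0])
        total = accepted + rejected
        if total < self.min_votes:
            return True  # not enough evidence yet to suppress the rule
        return accepted / total >= self.min_acceptance

    def run(self, diff: str) -> list[Finding]:
        # Keep only findings whose rule has not been consistently rejected.
        return [f for f in self.reviewer.review(diff) if self._is_trusted(f.rule)]
```

Swapping the model means writing another class with a `review` method. The accumulated feedback stays with the pipeline, which is where the compounding value sits.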

This infrastructure-first approach requires more upfront investment, more engineering time, and more patience. It also requires leadership that understands the difference between buying a product and building a capability. Not every organisation needs to go deep on AI infrastructure — but every organisation should understand where they sit on this spectrum and why.

AI-Curious vs AI-Committed: Two Very Different Organisations

Through conversations with IT leaders, engineering managers, and infrastructure teams across multiple sectors, I have started to see a clear pattern. Organisations are splitting into two distinct camps, and the gap between them is widening faster than most people realise.

AI-Curious organisations are running pilot projects. They have a few teams experimenting with AI coding assistants, maybe a proof-of-concept chatbot, possibly some exploratory work with AI-driven analytics. The experiments are genuine, but they share common traits: no dedicated AI budget (the spend is hidden in existing line items), no clear success metrics, and adoption driven primarily by executive enthusiasm rather than engineering demand.

These organisations tend to think tool-first. Someone in leadership reads about a new AI product, and the instruction comes down: “we should be using this.” The problem to solve is identified afterwards, if at all. Training is minimal — the assumption is that AI tools are intuitive enough that existing staff will simply adopt them. When adoption stalls, the conclusion is often that AI “is not ready yet” rather than that the implementation approach was flawed.

AI-Committed organisations look fundamentally different. AI is embedded in their workflows, not bolted on. It appears in their CI/CD pipelines, their monitoring dashboards, and their daily development processes. Crucially, they have ring-fenced AI budgets with clear ROI tracking. They know what they are spending, and they know what they are getting for it.

The committed camp takes a problem-first approach. They identify points of friction — slow code reviews, missed security vulnerabilities, manual incident response — and then evaluate whether AI can reduce that friction. They invest in training, treating prompt engineering and AI tool proficiency as core competencies rather than nice-to-haves. And critically, adoption is engineering-led with executive support, rather than executive-led with engineering compliance.

The uncomfortable truth is that the gap between these two groups is compounding. AI-Committed organisations are building capabilities that AI-Curious organisations will struggle to replicate even with eighteen months of effort, because the value is not just in the tools. It is in the institutional knowledge, the refined workflows, and the cultural comfort with AI-augmented processes.

What This Means for IT Teams Planning Budgets

If you are an IT leader, engineering manager, or infrastructure architect planning your 2026-2027 budget, the investment landscape above suggests several practical takeaways.

Reclassify AI spend as operational, not experimental. If your AI tooling budget still sits under “Innovation” or “R&D,” you are sending the wrong signal to your organisation. AI-assisted development tools, security scanning, and ops automation are operational costs. They should be budgeted, tracked, and evaluated like any other core engineering tool. This reclassification also protects the budget from being cut when the next shiny priority appears.

Invest in evaluation capability, not just tools. The AI tooling market is moving extraordinarily fast. The best coding assistant today may not be the best one in six months. Rather than committing entirely to a single vendor, invest in the ability to evaluate, switch, and integrate tools quickly. Build abstractions. Keep your options open. The organisations winning here are the ones that can adopt a new AI tool in days, not months.
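One way to picture "evaluation capability" is a small harness that scores any candidate tool against the same fixed set of cases. The sketch below assumes a tool can be wrapped as a plain function from prompt to answer and that exact string matching is a good enough check. Both are simplifying assumptions, and the function and vendor names are hypothetical.

```python
from typing import Callable

# A "tool" here is any callable that maps a prompt to an answer (assumption).
Tool = Callable[[str], str]
Case = tuple[str, str]  # (prompt, expected answer)


def evaluate(tool: Tool, cases: list[Case]) -> float:
    """Return the fraction of cases where the tool's answer matches exactly."""
    if not cases:
        raise ValueError("need at least one benchmark case")
    passed = sum(1 for prompt, expected in cases if tool(prompt).strip() == expected)
    return passed / len(cases)


def rank_tools(tools: dict[str, Tool], cases: list[Case]) -> list[tuple[str, float]]:
    """Score every tool on the same cases and sort best-first."""
    scores = {name: evaluate(tool, cases) for name, tool in tools.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Stand-in tools; real ones would wrap a vendor SDK behind the same signature.
candidates: dict[str, Tool] = {
    "vendor_a": lambda prompt: prompt.upper(),
    "vendor_b": lambda prompt: prompt,
}
benchmark: list[Case] = [("abc", "ABC"), ("x", "X"), ("Q", "Q")]
ranking = rank_tools(candidates, benchmark)
```

The cases are the asset. If they reflect your own codebase and standards, trialling a new vendor comes down to writing one adapter function and rerunning the ranking.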

Budget for training, not just licences. Every AI tool licence you buy without a corresponding training investment is money partially wasted. The difference between a developer who uses an AI coding assistant effectively and one who does not is enormous; in my experience it can easily be a 3-5x difference in productivity gain. Structured training, internal knowledge sharing, and prompt engineering workshops should be line items alongside the tool subscriptions.

Measure what matters. Avoid vanity metrics like “number of AI-generated lines of code” or “AI tool adoption rate.” Instead, measure outcomes: time to merge, defect escape rate, mean time to detect and respond to incidents, and developer satisfaction scores. These are the metrics that tell you whether your AI investment is actually working, and they are the metrics that will justify continued or increased spend.
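The outcome metrics above are cheap to compute once the raw events are exported from your tooling. As a rough sketch, assuming pull requests and incidents are available as simple timestamped records (the record shapes and function names here are hypothetical):

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median


@dataclass
class PullRequest:
    opened: datetime
    merged: datetime


@dataclass
class Incident:
    started: datetime
    detected: datetime
    resolved: datetime


def _hours(start: datetime, end: datetime) -> float:
    # Dividing one timedelta by another yields a float.
    return (end - start) / timedelta(hours=1)


def median_time_to_merge_hours(prs: list[PullRequest]) -> float:
    return median(_hours(pr.opened, pr.merged) for pr in prs)


def defect_escape_rate(escaped_to_production: int, caught_before_release: int) -> float:
    """Share of all known defects that reached production."""
    total = escaped_to_production + caught_before_release
    return escaped_to_production / total if total else 0.0


def mean_time_to_detect_hours(incidents: list[Incident]) -> float:
    return mean(_hours(i.started, i.detected) for i in incidents)


def mean_time_to_respond_hours(incidents: list[Incident]) -> float:
    # Measured here from detection to resolution; definitions vary by team.
    return mean(_hours(i.detected, i.resolved) for i in incidents)
```

Capturing these figures before a tool is rolled out, then again a quarter later, gives you a before-and-after comparison rather than an adoption count.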

Plan for AI infrastructure costs to grow. If you are serious about AI adoption, your compute costs will increase. AI-assisted CI/CD, model inference for security scanning, and intelligent monitoring all consume resources. Factor this into your cloud budget forecasts now, rather than discovering a 20% overspend in Q3.
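A back-of-the-envelope forecast is usually enough to see an overspend coming. The sketch below assumes AI-related compute grows at a steady monthly rate on top of a flat baseline. That growth model, the function names, and every figure in the example are illustrative assumptions, not data.

```python
def forecast_spend(
    baseline_monthly: float,
    ai_monthly_start: float,
    ai_monthly_growth: float,
    months: int,
) -> list[float]:
    """Projected total spend per month: flat baseline plus compounding AI compute."""
    return [
        baseline_monthly + ai_monthly_start * (1 + ai_monthly_growth) ** month
        for month in range(months)
    ]


def overspend_ratio(forecast: list[float], budget_monthly: float) -> float:
    """Fraction by which forecast spend exceeds the planned budget over the period."""
    planned = budget_monthly * len(forecast)
    return (sum(forecast) - planned) / planned


# Illustrative figures only: 100k baseline, 10k of AI compute growing 8% a month.
year = forecast_spend(100_000, 10_000, 0.08, 12)
ratio = overspend_ratio(year, budget_monthly=100_000)
```

If `ratio` comes out uncomfortably high for a budget that only covers the baseline, that is the conversation to have with finance now rather than in Q3.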

The Investment Signal You Should Be Watching

If there is one signal to watch as 2026 progresses, it is this: pay attention to where engineering teams are asking for AI tooling, not where executives are mandating it. Bottom-up demand is the most reliable indicator of genuine value. When developers ask for better AI coding assistants, when security engineers request AI-driven scanning tools, when SREs want intelligent monitoring — that is where the real ROI lives.

Top-down AI mandates produce compliance. Bottom-up AI adoption produces capability. The organisations that understand this distinction are the ones making the smartest investments in 2026, and they are the ones that will be best positioned when the next wave of AI capability arrives.

The AI investment landscape is not about betting on the future. It is about reading the present clearly and acting on what the data actually shows. Right now, the data shows that the most impactful AI spend is boring, operational, and deeply embedded in engineering workflows. That is not a failure of imagination. That is a sign of maturity.

Next in this series: How AI Is Reshaping the Developer’s Daily Workflow — a closer look at how AI coding tools are changing what developers actually do all day, and what that means for engineering culture.
