TL;DR

- The threat is real now — adversaries are harvesting encrypted data today to decrypt it when quantum computers arrive.

- NIST standards are final — ML-KEM, ML-DSA, and SLH-DSA were published in August 2024. No more waiting for “final” specs.

- CNSA 2.0 deadline: January 2027. From then, new US National Security Systems acquisitions must be CNSA 2.0 compliant, and that sets the pace for everyone else.

- Start with crypto-agility — abstract your cryptographic operations so you can swap algorithms without rewriting applications.

- Hybrid certificates bridge the gap — deploy classical + PQC side by side during the transition period.

If you work in IT security, infrastructure, or cloud engineering, post-quantum cryptography (PQC) has probably been on your radar for a while. The difference in 2026 is that we have moved from “interesting research topic” to “you need a migration plan.” NIST has finalised its first post-quantum standards. The US government has set a hard compliance deadline. AWS, Google, and Microsoft have all published their migration strategies. The question is no longer whether to migrate but how fast you can do it.

This guide cuts through the academic noise and gives you a practical, enterprise-focused path to PQC readiness. No PhD in lattice mathematics required.

Why Post-Quantum Cryptography Matters Right Now

The cryptographic algorithms that protect virtually all internet traffic today — RSA, ECDSA, ECDH, Diffie-Hellman — rely on mathematical problems that classical computers cannot solve efficiently. Factoring large numbers, computing discrete logarithms — these are hard for conventional hardware. They are not hard for quantum computers running Shor’s algorithm.

The critical nuance that catches people off guard is harvest now, decrypt later (HNDL). Nation-state adversaries and sophisticated threat actors are already intercepting and storing encrypted traffic. They cannot read it today. But when a cryptographically relevant quantum computer (CRQC) arrives — estimated somewhere between 2030 and 2040 — they will be able to decrypt that stored data retroactively.

If your organisation handles data that needs to remain confidential for 10 or more years — health records, financial data, government communications, intellectual property — the HNDL threat means you are already behind. The data being harvested today could be readable within a decade.
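One common way to formalise this argument is Mosca's inequality. Let x be the number of years data must stay secret, y the years your migration will take, and z the years until a CRQC exists. If x + y > z, data harvested today is exposed. The sketch below encodes that check. The migration duration and the CRQC year are assumptions you supply; the 2030 to 2040 range above is only an estimate, and the example data classes are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DataClass:
    """A category of data with a required confidentiality lifetime."""
    name: str
    shelf_life_years: int  # x: how long the data must remain secret


def is_exposed(shelf_life_years: int, migration_years: int,
               years_until_crqc: int) -> bool:
    """Mosca's inequality: data is exposed if x + y > z."""
    return shelf_life_years + migration_years > years_until_crqc


def exposure_report(items, migration_years, current_year, crqc_year):
    """Return (name, exposed) for each data class under one CRQC estimate."""
    z = crqc_year - current_year
    return [(d.name, is_exposed(d.shelf_life_years, migration_years, z))
            for d in items]


if __name__ == "__main__":
    # Hypothetical inputs: a 5-year migration and a CRQC assumed in 2035.
    inventory = [
        DataClass("health records", 25),
        DataClass("session cookies", 0),
    ]
    for name, exposed in exposure_report(inventory, migration_years=5,
                                         current_year=2026, crqc_year=2035):
        print(f"{name}: {'AT RISK' if exposed else 'ok'}")
```

Re-running the report with a later CRQC year shows how sensitive the conclusion is to that single estimate.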

The NIST Standards: What Was Actually Published

In August 2024, NIST published three finalised post-quantum cryptographic standards after an eight-year evaluation process. These are not drafts or candidates — they are the official standards that vendors and governments are now building against.

| Standard | Based On | Purpose | Public Key Sizes |
|---|---|---|---|
| ML-KEM (FIPS 203) | CRYSTALS-Kyber | Key encapsulation (replacing RSA/ECDH key exchange) | 800 / 1,184 / 1,568 bytes (ML-KEM-512 / 768 / 1024) |
| ML-DSA (FIPS 204) | CRYSTALS-Dilithium | Digital signatures (replacing RSA/ECDSA signatures) | 1,312 / 1,952 / 2,592 bytes (ML-DSA-44 / 65 / 87) |
| SLH-DSA (FIPS 205) | SPHINCS+ | Stateless hash-based signatures (conservative fallback) | 32 / 48 / 64 bytes (128- / 192- / 256-bit security) |

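The first thing you will notice is the key sizes. ML-KEM and ML-DSA public keys are significantly larger than their classical equivalents. An RSA-2048 public key has a 256-byte modulus and an X25519 public key is just 32 bytes, while an ML-KEM-768 public key is 1,184 bytes. SLH-DSA inverts the pattern: it has tiny keys but signatures of 7,856 to 49,856 bytes, depending on the parameter set. This has real implications for TLS handshakes, certificate chains, and network overhead, which is exactly why testing matters.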
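The arithmetic behind that overhead is easy to check. The sketch below uses the FIPS 203 sizes for ML-KEM-768 (a 1,184-byte encapsulation key and a 1,088-byte ciphertext) and the 32-byte X25519 public key. From those it computes the key-share payloads in a TLS 1.3 handshake using the hybrid X25519MLKEM768 group, where each side sends its ML-KEM value and its X25519 value concatenated.

```python
# Sizes in bytes: FIPS 203 for ML-KEM-768, RFC 7748 for X25519.
X25519_PUBLIC = 32
MLKEM768_ENCAPS_KEY = 1184
MLKEM768_CIPHERTEXT = 1088


def key_share_sizes(hybrid: bool) -> dict:
    """Bytes of key-share payload sent by each side of a TLS 1.3 handshake."""
    if not hybrid:
        # Classical X25519: each side sends one 32-byte public key.
        return {"client": X25519_PUBLIC, "server": X25519_PUBLIC}
    # Hybrid X25519MLKEM768: the client sends an ML-KEM encapsulation key plus
    # an X25519 key; the server replies with an ML-KEM ciphertext plus an X25519 key.
    return {
        "client": MLKEM768_ENCAPS_KEY + X25519_PUBLIC,
        "server": MLKEM768_CIPHERTEXT + X25519_PUBLIC,
    }


if __name__ == "__main__":
    classical = key_share_sizes(hybrid=False)
    hybrid = key_share_sizes(hybrid=True)
    extra = sum(hybrid.values()) - sum(classical.values())
    print(f"classical: {classical}, hybrid: {hybrid}, extra bytes: {extra}")
```

That works out to about 2.2 KB of extra key-share data per handshake. This is before any post-quantum certificate is involved; ML-DSA signatures in the certificate chain add more on top.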

NIST has also selected a fourth algorithm, FN-DSA (based on FALCON), for a future standard expected in late 2025 or 2026. FN-DSA offers smaller signatures than ML-DSA, making it attractive for bandwidth-constrained environments.

CNSA 2.0: The Deadline That Sets the Pace

The NSA’s Commercial National Security Algorithm Suite 2.0 (CNSA 2.0) is the compliance framework that gives PQC migration its urgency. It applies directly to US National Security Systems (NSS), but its influence extends much further — government contractors, defence supply chains, and any organisation that handles classified or sensitive government data.

The key dates from CNSA 2.0:

- By 2025: Software and firmware signing, web browsers and servers, and cloud services should support and prefer CNSA 2.0 (PQC) algorithms.

- From January 2027: New NSS acquisitions must be CNSA 2.0 compliant.

- By 2030: Software and firmware signing and traditional networking equipment (VPNs, routers) should use CNSA 2.0 algorithms exclusively.

- By 2033: Web browsers, servers, cloud services, operating systems, and custom or legacy systems should complete the switch, with the overall NSS transition targeted for 2035.

Even if your organisation is not directly subject to CNSA 2.0, these deadlines matter. They drive vendor roadmaps, cloud provider timelines, and industry best practices. When AWS, Azure, and Google Cloud begin defaulting to PQC algorithms, the rest of the market follows.

What AWS, Google, and Microsoft Are Doing

The major cloud providers are not waiting. Their published migration plans give a useful window into where the industry is heading.

AWS published its PQC migration roadmap in 2025. AWS Key Management Service (KMS) already supports ML-KEM for key encapsulation. AWS Certificate Manager is adding hybrid certificate support, and s2n-tls (AWS’s TLS library) supports post-quantum key exchange in production. AWS recommends customers begin with a cryptographic inventory and prioritise TLS endpoints and long-lived data encryption keys.

Google has been running PQC experiments since 2016, when it trialled CECPQ1 in Chrome. Google's internal target is full PQC migration across all services by 2029. Chrome 124 enabled hybrid post-quantum key exchange in TLS 1.3 by default using a pre-standard Kyber draft. Chrome 131 then switched to the standardised ML-KEM, as X25519MLKEM768. Google Cloud KMS is adding PQC key types, and Cloud HSM will follow.

Microsoft is integrating PQC into Windows, Azure, and Microsoft 365. SymCrypt, Microsoft’s core cryptographic library, added ML-KEM and ML-DSA support in late 2024. Azure Key Vault and Azure Managed HSM are on track for PQC support in 2026. Microsoft has also published a PQC readiness assessment tool for enterprise customers.

The Practical Migration Path

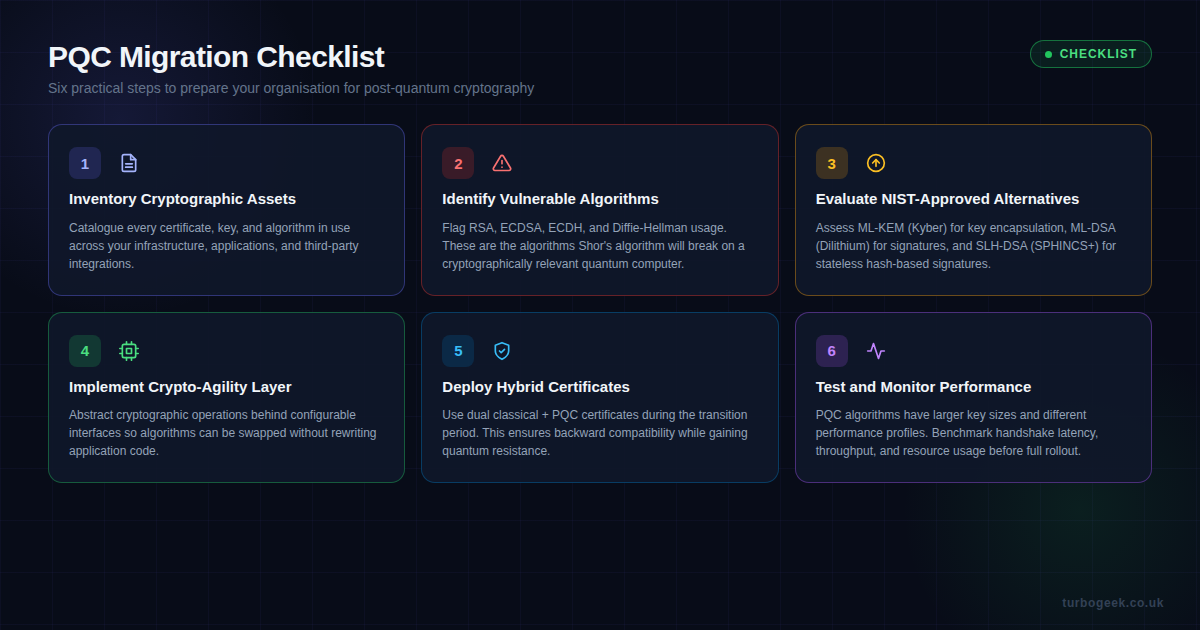

Theory is useful. A plan you can actually execute is better. Here is the six-step approach that maps to real-world enterprise environments.

Step 1: Inventory Your Cryptographic Assets

You cannot migrate what you cannot find. Most organisations are surprised by how many places cryptography is embedded — TLS certificates, database encryption keys, API authentication tokens, code signing certificates, VPN tunnels, SSH keys, encrypted backups, and hardware security modules.

Tools like AWS Cryptographic Migration Readiness Tool, IBM's z/OS Crypto Discovery, or third-party scanners such as Cryptosense can automate this discovery. The goal is a complete inventory that records, for each asset, the algorithm type, key length, and data sensitivity classification.
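Before any tool is in place, a crude first pass is to search configuration and key directories for PEM-encoded material. The Python sketch below walks a directory tree and counts PEM blocks by their `-----BEGIN ...-----` label. It only finds PEM files and does not parse the keys themselves, and the directory you point it at is your choice. Treat its output as a starting inventory, not a substitute for the tools above.

```python
import re
from collections import Counter
from pathlib import Path

# Matches PEM armour lines such as "-----BEGIN CERTIFICATE-----".
PEM_LABEL = re.compile(r"-----BEGIN ([A-Z0-9 ]+)-----")


def scan_pem(root: str, max_bytes: int = 1_000_000) -> Counter:
    """Count PEM block labels (CERTIFICATE, RSA PRIVATE KEY, ...) per file."""
    counts = Counter()
    for path in Path(root).rglob("*"):
        try:
            if not path.is_file() or path.stat().st_size > max_bytes:
                continue
            text = path.read_text(encoding="ascii", errors="ignore")
        except OSError:
            continue  # unreadable file: skip it rather than abort the scan
        for label in PEM_LABEL.findall(text):
            counts[(label, str(path))] += 1
    return counts


if __name__ == "__main__":
    import sys
    root = sys.argv[1] if len(sys.argv) > 1 else "."
    for (label, path), n in sorted(scan_pem(root).items()):
        print(f"{n:3d}  {label:<25} {path}")
```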

Step 2: Identify Quantum-Vulnerable Algorithms

From your inventory, flag everything using RSA, ECDSA, ECDH, DSA, or classical Diffie-Hellman. These are the algorithms that Shor’s algorithm targets. Symmetric algorithms (AES-256) and hash functions (SHA-256 and above) are considered quantum-safe with their current key sizes, though Grover’s algorithm does halve their effective security — which is why AES-256 (not AES-128) is the CNSA 2.0 requirement.

Prioritise by data sensitivity and lifespan. Data that must remain confidential for 15+ years needs PQC protection now, not in 2030.
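Once you have an inventory, the flagging and prioritisation rules above can be applied mechanically. The sketch below encodes the rules from this step:

- RSA, ECDSA, ECDH, DSA, and classical DH are flagged as quantum-vulnerable.
- AES-128 is flagged for an upgrade to AES-256.
- Findings are sorted by how long the data must stay confidential.

The record fields and example assets are hypothetical.

```python
from dataclasses import dataclass

# Public-key algorithms that Shor's algorithm breaks.
SHOR_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DSA", "DH"}
# Symmetric primitives whose security margin Grover's algorithm halves.
GROVER_WEAKENED = {"AES-128"}


@dataclass
class Asset:
    name: str                   # hypothetical asset identifier
    algorithm: str              # e.g. "RSA", "AES-256"
    confidentiality_years: int  # how long the protected data must stay secret


def triage(assets):
    """Return (asset, finding) pairs, longest-lived data first."""
    findings = []
    for a in assets:
        if a.algorithm in SHOR_VULNERABLE:
            findings.append((a, "replace with PQC"))
        elif a.algorithm in GROVER_WEAKENED:
            findings.append((a, "upgrade to AES-256"))
    return sorted(findings, key=lambda f: f[0].confidentiality_years,
                  reverse=True)


if __name__ == "__main__":
    assets = [
        Asset("api-gateway TLS cert", "ECDSA", 1),
        Asset("patient-db backup key", "RSA", 25),
        Asset("log archive", "AES-128", 7),
        Asset("object storage", "AES-256", 10),
    ]
    for asset, finding in triage(assets):
        print(f"{asset.confidentiality_years:>3}y  {asset.name}: {finding}")
```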

Step 3: Evaluate NIST-Approved Alternatives

Map your vulnerable algorithms to their PQC replacements:

- RSA/ECDH key exchange — replace with ML-KEM (FIPS 203). This is the primary algorithm for TLS key establishment and key encapsulation.

- RSA/ECDSA signatures — replace with ML-DSA (FIPS 204). Use for code signing, certificate signatures, and document authentication.

- Conservative fallback — SLH-DSA (FIPS 205) offers hash-based signatures whose security rests only on the underlying hash function, a well-understood assumption. The trade-off is slower signing and much larger signatures.
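The same mapping can live in code as a lookup table that migration tooling consults. The parameter sets are partly assumptions. ML-KEM-1024 and ML-DSA-87 are the levels CNSA 2.0 specifies. The ML-KEM-768 and ML-DSA-65 defaults for other systems are a common choice, not a requirement, and SLH-DSA-SHA2-128s is just one of SLH-DSA's parameter sets. The algorithm labels are hypothetical.

```python
# Classical algorithm label -> usage category (labels are hypothetical).
CLASSICAL_USAGE = {
    "RSA-KEX": "key_exchange", "ECDH": "key_exchange", "DH": "key_exchange",
    "RSA-SIG": "signature", "ECDSA": "signature", "DSA": "signature",
}

# Usage category -> (PQC replacement, standard). Defaults are assumptions.
DEFAULTS = {
    "key_exchange": ("ML-KEM-768", "FIPS 203"),
    "signature": ("ML-DSA-65", "FIPS 204"),
}
# CNSA 2.0 specifies the highest parameter sets.
CNSA2 = {
    "key_exchange": ("ML-KEM-1024", "FIPS 203"),
    "signature": ("ML-DSA-87", "FIPS 204"),
}
CONSERVATIVE_SIGNATURE = ("SLH-DSA-SHA2-128s", "FIPS 205")


def replacement_for(algorithm: str, cnsa2: bool = False,
                    conservative: bool = False) -> tuple:
    """Map a classical algorithm label to a suggested PQC replacement."""
    usage = CLASSICAL_USAGE[algorithm]  # raises KeyError for unknown labels
    if cnsa2:
        return CNSA2[usage]
    if conservative and usage == "signature":
        return CONSERVATIVE_SIGNATURE
    return DEFAULTS[usage]


if __name__ == "__main__":
    for alg in ("ECDH", "ECDSA"):
        print(alg, "->", replacement_for(alg),
              "| CNSA 2.0:", replacement_for(alg, cnsa2=True))
```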

Step 4: Implement Crypto-Agility

Crypto-agility is the single most important architectural concept in this migration. It means designing your systems so that cryptographic algorithms can be changed via configuration rather than code changes.

In practice, this means:

- Abstracting cryptographic operations behind well-defined interfaces or service layers.

- Storing algorithm identifiers alongside encrypted data so the system knows which algorithm was used.

- Using protocol negotiation (as TLS already does) rather than hardcoding cipher suites.

- Ensuring your key management infrastructure can handle multiple algorithm types simultaneously.
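Here is a minimal illustration of these ideas. Operations go through a registry keyed by algorithm identifier, the identifier is stored with every output, and the default algorithm comes from configuration. Python's standard library has no PQC primitives, so HMAC-SHA256 and HMAC-SHA3-256 stand in for real algorithms. The registry, envelope format, and names are all hypothetical. Adding an ML-DSA implementation would then mean registering one more entry rather than rewriting callers.

```python
import hashlib
import hmac
from typing import Callable, Dict

# Registry: algorithm identifier -> tagging function (stand-ins for real algorithms).
TAGGERS: Dict[str, Callable[[bytes, bytes], bytes]] = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha3-256": lambda key, msg: hmac.new(key, msg, hashlib.sha3_256).digest(),
}

# In a real system this would be loaded from configuration, not code.
CONFIG = {"default_alg": "hmac-sha256"}


def protect(key: bytes, msg: bytes) -> dict:
    """Tag msg with the configured algorithm and record which one was used."""
    alg = CONFIG["default_alg"]
    return {"alg": alg, "msg": msg, "tag": TAGGERS[alg](key, msg)}


def verify(key: bytes, envelope: dict) -> bool:
    """Verify with the algorithm stored in the envelope, not the current default."""
    tagger = TAGGERS.get(envelope["alg"])
    if tagger is None:
        return False  # unknown or retired algorithm
    return hmac.compare_digest(tagger(key, envelope["msg"]), envelope["tag"])


if __name__ == "__main__":
    key = b"demo-key"
    old = protect(key, b"hello")
    CONFIG["default_alg"] = "hmac-sha3-256"  # rotate via configuration only
    new = protect(key, b"hello")
    print(old["alg"], verify(key, old))
    print(new["alg"], verify(key, new))
```

Because the identifier travels with the data, records written before a rotation still verify afterwards. Retiring an algorithm then becomes a matter of removing its registry entry.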

Even if you do nothing else today, implementing crypto-agility dramatically reduces the cost and risk of future algorithm transitions — whether for PQC or any other cryptographic evolution.

Step 5: Deploy Hybrid Certificates

A hybrid certificate contains both a classical signature (e.g. ECDSA) and a PQC signature (e.g. ML-DSA). Clients that understand PQC verify the quantum-safe signature. Older clients that do not understand PQC fall back to verifying the classical one. This approach provides backward compatibility while the transition is under way.

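Chrome, Firefox, and most modern TLS libraries already support hybrid key exchange using ML-KEM + X25519. Hybrid key exchange is a separate mechanism from hybrid certificates. Key exchange protects session confidentiality against harvest-now-decrypt-later today. Certificates protect authentication, which only needs to be quantum-safe once a CRQC actually exists. Certificate authorities including DigiCert and Entrust are issuing hybrid certificates. AWS Certificate Manager and Google Cloud Certificate Authority Service both have hybrid support on their roadmaps.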
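Hybrid key exchange is safe to deploy early because the session key depends on both shared secrets, so an attacker has to break X25519 and ML-KEM. The sketch below shows that idea with a concatenate-then-derive combiner built on an HKDF (RFC 5869) implementation from the standard library. The two input secrets are random placeholders, because Python's standard library has neither X25519 nor ML-KEM, and the `info` label is hypothetical. Real TLS 1.3 feeds the concatenated secret into its own key schedule (ML-KEM first, then X25519, for X25519MLKEM768). This sketch simplifies that step.

```python
import hashlib
import hmac
import os


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869) with SHA-256; an empty salt means 32 zero bytes."""
    return hmac.new(salt or b"\x00" * 32, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """HKDF-Expand (RFC 5869) with SHA-256."""
    out, block, counter = b"", b"", 1
    while len(out) < length:
        block = hmac.new(prk, block + info + bytes([counter]),
                         hashlib.sha256).digest()
        out += block
        counter += 1
    return out[:length]


def combine(mlkem_secret: bytes, x25519_secret: bytes) -> bytes:
    """Derive one 32-byte key that depends on both shared secrets."""
    prk = hkdf_extract(b"", mlkem_secret + x25519_secret)
    return hkdf_expand(prk, b"example hybrid key", 32)  # hypothetical label


if __name__ == "__main__":
    # Placeholders: real values come from ML-KEM decapsulation and X25519.
    ss_mlkem, ss_x25519 = os.urandom(32), os.urandom(32)
    print(combine(ss_mlkem, ss_x25519).hex())
```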

Step 6: Test and Monitor Performance

PQC algorithms behave differently from classical ones. ML-KEM is actually faster than RSA for key encapsulation, but ML-DSA signatures are larger, which increases TLS handshake sizes. In our testing, TLS handshakes with hybrid ML-KEM + X25519 added roughly 0.5 ms of latency — negligible for most applications but worth measuring in latency-sensitive environments.

Key performance considerations:

- Bandwidth: PQC certificates and handshakes are larger. Monitor network overhead, especially on mobile connections.

- Latency: Benchmark TLS handshake times with hybrid and pure PQC configurations.

- Memory: Larger key sizes mean higher memory usage in key stores and HSMs.

- Compatibility: Test against all clients and intermediaries (load balancers, CDNs, proxies) in your environment.
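For the latency point, a small harness that times repeated handshakes and reports the median and 95th percentile is enough to compare configurations. This sketch uses Python's standard `ssl` module. The key-exchange group it negotiates therefore depends on the OpenSSL build Python is linked against and on the server's settings. OpenSSL 3.5 and later offers X25519MLKEM768 by default, assuming nothing overrides the library's default groups. `SSLSocket.version()` reports only the protocol version, not the group, so confirm the group separately. The host is whatever staging endpoint you pass in.

```python
import socket
import ssl
import statistics
import sys
import time
from typing import Callable, Dict


def handshake_seconds(host: str, port: int = 443, timeout: float = 5.0) -> float:
    """Time the TLS handshake alone; the TCP connect happens before the clock starts."""
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        start = time.perf_counter()
        with ctx.wrap_socket(sock, server_hostname=host):  # handshake runs here
            elapsed = time.perf_counter() - start
    return elapsed


def summarise(sample_fn: Callable[[], float], runs: int = 20) -> Dict[str, float]:
    """Call sample_fn `runs` times (at least 2) and return median and p95 in ms."""
    samples = [sample_fn() * 1000 for _ in range(runs)]
    return {
        "median_ms": statistics.median(samples),
        "p95_ms": statistics.quantiles(samples, n=20)[-1],  # 95th percentile cut point
    }


if __name__ == "__main__":
    if len(sys.argv) < 2:
        print("usage: python bench.py <staging-host>")
    else:
        print(summarise(lambda: handshake_seconds(sys.argv[1])))
```

Run it once against a classical-only configuration and once with hybrid key exchange enabled, so that you compare like with like on the same network path.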

A Note on Timelines: When Do You Actually Need to Act?

The honest answer depends on your data sensitivity. If you handle government-classified data, you are already under CNSA 2.0 pressure and should be in active migration. If you handle healthcare, financial, or IP-heavy data with long confidentiality requirements, starting in 2026 is prudent. If you are a typical SaaS company with mostly transient data, you have more runway — but crypto-agility should still be on your 2026 roadmap.

The one thing everyone should do today: stop deploying new systems with hardcoded cryptographic assumptions. Every new application, service, or infrastructure component you build from now on should be crypto-agile by design.

Try post-quantum crypto on your own box today

You do not need to wait for the migration deadline to get hands-on. OpenSSH 9.9+ and OpenSSL 3.5+ both ship with usable post-quantum primitives. The two blocks below are the smallest experiments that show real PQ negotiation happening on a real machine.

SSH: turn on hybrid ML-KEM key exchange
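One way to start is to ask the local OpenSSH client which key-exchange algorithms it supports (`ssh -Q kex`). The Python sketch below does that and builds a `KexAlgorithms` line that puts the hybrid ML-KEM method first. `mlkem768x25519-sha256` is the method OpenSSH 9.9 introduced, and `sntrup761x25519-sha512@openssh.com` is its older hybrid method. The `curve25519-sha256` fallback and the ordering are assumptions to adjust for your environment. After adding the line to `~/.ssh/config`, connect with `ssh -v` and look for the negotiated method on the `kex: algorithm:` debug line.

```python
import shutil
import subprocess

# Hybrid post-quantum key-exchange methods, most preferred first.
PQ_KEX = ["mlkem768x25519-sha256", "sntrup761x25519-sha512@openssh.com"]
CLASSICAL_FALLBACK = "curve25519-sha256"  # assumption: keep one classical option


def supported_kex() -> list:
    """Return the KEX algorithms the local ssh client lists via `ssh -Q kex`."""
    if shutil.which("ssh") is None:
        return []
    out = subprocess.run(["ssh", "-Q", "kex"], capture_output=True,
                         text=True, check=False).stdout
    return [line.strip() for line in out.splitlines() if line.strip()]


def kex_config_line(available: list) -> str:
    """Build a KexAlgorithms line: supported hybrid PQ methods, then the fallback."""
    chosen = [alg for alg in PQ_KEX if alg in available]
    if CLASSICAL_FALLBACK in available:
        chosen.append(CLASSICAL_FALLBACK)
    return ("KexAlgorithms " + ",".join(chosen)) if chosen else ""


if __name__ == "__main__":
    algs = supported_kex()
    if "mlkem768x25519-sha256" not in algs:
        print("No ML-KEM hybrid KEX found; OpenSSH 9.9 or later is needed.")
    print(kex_config_line(algs))
```

Note that an explicit `KexAlgorithms` list replaces the default set. Servers that support none of the listed methods will refuse the connection, so try this on a test host first.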

TLS: ML-KEM key encapsulation with OpenSSL
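A quick Python check shows whether the OpenSSL your tooling uses is new enough. `ssl.OPENSSL_VERSION_INFO` reports the library Python is linked against, and `openssl list -kem-algorithms` lists the KEMs the command-line tool provides. The parsing below simply searches that output for ML-KEM parameter-set names. That is an assumption about the output format, not a stable interface. The 3.5 threshold is the version mentioned above.

```python
import re
import shutil
import ssl
import subprocess

MLKEM_NAME = re.compile(r"ML-KEM-(512|768|1024)")


def python_openssl_has_pqc() -> bool:
    """True if the OpenSSL linked into Python reports version 3.5 or newer."""
    major, minor = ssl.OPENSSL_VERSION_INFO[:2]
    return (major, minor) >= (3, 5)


def mlkem_params(listing: str) -> set:
    """Extract ML-KEM parameter-set names from `openssl list -kem-algorithms` output."""
    return {"ML-KEM-" + m for m in MLKEM_NAME.findall(listing)}


def cli_kem_listing() -> str:
    """Run `openssl list -kem-algorithms` if the CLI is installed."""
    if shutil.which("openssl") is None:
        return ""
    return subprocess.run(["openssl", "list", "-kem-algorithms"],
                          capture_output=True, text=True, check=False).stdout


if __name__ == "__main__":
    status = "PQC-capable" if python_openssl_has_pqc() else "too old for ML-KEM"
    print(ssl.OPENSSL_VERSION, "->", status)
    print("CLI ML-KEM parameter sets:", sorted(mlkem_params(cli_kem_listing())) or "none")
```

The two results can differ. Python is often linked against a different OpenSSL than the one on your `PATH`, so check both before concluding a machine is ready.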

Cloudflare Research keeps a public test endpoint that returns a green badge if your TLS handshake used a hybrid PQ group. It is the fastest way to confirm a browser, runtime or load balancer is genuinely doing post-quantum negotiation rather than just reporting it as configured.

Getting Started This Week

If this article has convinced you that PQC migration is worth your attention, here is what you can do in the next five working days:

- Run a cryptographic inventory on your most critical system. Even a manual scan of your TLS certificates and KMS keys gives you a starting point.

- Check your cloud provider’s PQC documentation. AWS, Azure, and GCP all have published migration guides specific to their services.

- Test hybrid TLS. If you run nginx or Apache, try configuring ML-KEM + X25519 hybrid key exchange in a staging environment. OpenSSL 3.5, released in April 2025, includes ML-KEM, ML-DSA, and SLH-DSA.

- Read the NIST standards. FIPS 203, 204, and 205 are freely available. The implementation sections are more accessible than you might expect.

- Brief your leadership. PQC migration is a multi-year programme with budget implications. Starting the conversation now avoids last-minute scrambling.

The quantum threat to cryptography is not science fiction. The standards exist. The deadlines are set. The cloud providers are moving. The only variable is whether your organisation moves proactively or reactively — and in security, reactive is always more expensive.
