The Middle East is spending more on AI per capita than almost anywhere else on earth. The UAE, Saudi Arabia, and Israel are using it to reshape surveillance, defense, and political influence — and the technology is changing the region’s power dynamics faster than any foreign policy update.

This isn’t future speculation. The infrastructure is being built right now, the systems are operational, and the geopolitical consequences are already playing out. I find this genuinely fascinating and more than a little unsettling — because what gets tested and normalized here tends to get exported globally.

TL;DR

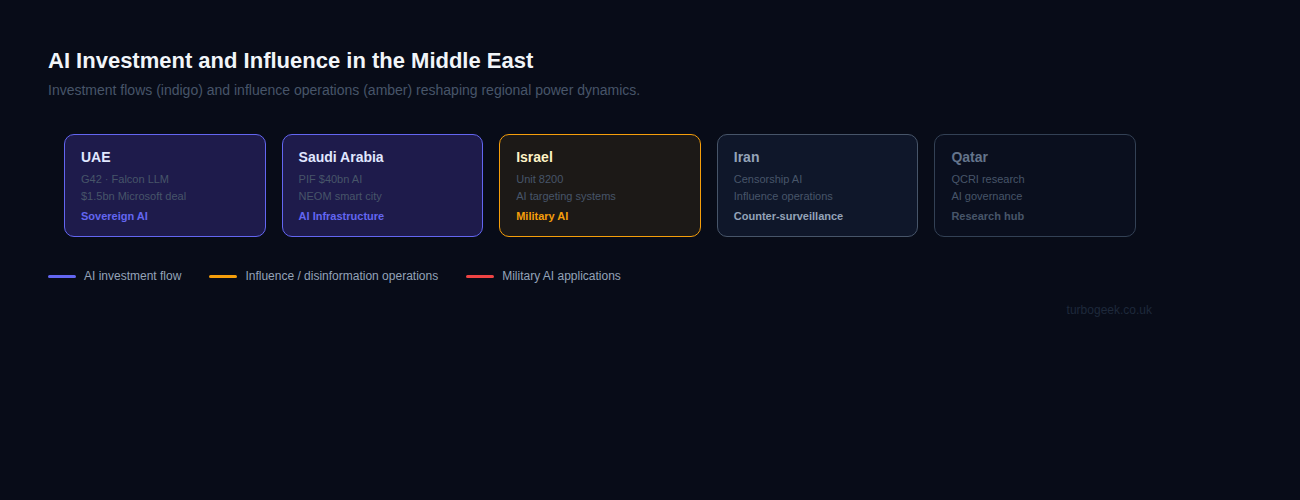

- AI Investment Race: Saudi Arabia has committed $40bn via its Public Investment Fund; the UAE’s G42 secured a $1.5bn investment from Microsoft, and the UAE has built sovereign LLMs such as Falcon and the Arabic-first Jais.

- Surveillance at Scale: Gulf states are deploying facial recognition and AI monitoring across smart cities — NEOM is designed ground-up with AI surveillance baked in.

- Influence Operations: AI has dramatically cut the cost of political disinformation. Iran has been linked to AI-generated campaigns targeting Israeli public opinion; Gulf states have used AI content farms to shape regional narratives.

- Military AI: Israel’s Lavender system (reported by +972 Magazine) used AI to generate targeting recommendations in Gaza — raising urgent questions about accountability and human oversight in lethal decision-making.

- Global Template: What gets normalized in the Middle East (AI targeting, surveillance exports, sovereign LLMs) is likely to become the global default.

| Country | AI Investment Focus | Notable Programme |
|---|---|---|
| UAE | National AI strategy, LLMs | G42, Falcon LLM, Nvidia deal, $1.5bn Microsoft deal |
| Saudi Arabia | NEOM, smart city AI | $40bn Public Investment Fund AI commitment |
| Israel | Military AI, surveillance | Unit 8200, AI targeting systems (Lavender) |
| Iran | Domestic AI, counter-surveillance | Censorship AI, influence operations |
| Qatar | AI governance, finance | QCRI research, smart city projects |

New to this topic? Start with The AI Investment Race in the Gulf — it establishes the scale before the harder questions.

The AI Investment Race in the Gulf

Let’s start with the money, because the scale of it is extraordinary.

Saudi Arabia’s Public Investment Fund has committed $40 billion to AI infrastructure. That’s not a rounding error in a government budget — it’s a deliberate strategic bet on becoming an AI superpower. The ambition behind NEOM, Saudi Arabia’s planned city in the northwest of the country, is that it will be the first major urban environment designed from scratch with AI monitoring and management integrated at every layer. Smart city is probably an understatement.

The UAE is, if anything, more advanced in execution. G42, headquartered in Abu Dhabi, is one of the world’s largest private AI companies, and in April 2024 it secured a $1.5 billion investment from Microsoft alongside a commitment to run its AI services on Microsoft’s Azure cloud. More significantly, the UAE has built its own large language models: Falcon, developed by Abu Dhabi’s Technology Innovation Institute, and Jais, a G42-backed model that treats Arabic as a first-class language. This isn’t a bolt-on translation layer. It’s a sovereign AI stack, built to reduce dependence on US or Chinese technology providers.

That last point matters more than it might initially appear. These countries are not just buying AI — they’re building it, on their own terms, with their own data and their own languages. The phrase “AI sovereignty” is being used by Gulf state officials in the same way Western politicians use “energy security.” The strategic logic is identical.

Whether that sovereignty is ultimately achievable — given the dependence on Nvidia hardware, US cloud hyperscalers, and Western-trained researchers — is a legitimate question. But the intent is clear, and the investment is real.

Surveillance, Facial Recognition, and State Control

AI surveillance is being deployed across the Gulf at a scale that’s genuinely hard to grasp from the outside. NEOM isn’t just a smart city — it’s designed to monitor everything that moves within it. Facial recognition in public spaces is operational in the UAE. The question isn’t whether the infrastructure exists; it’s what it’s being used for.

Governments in the region argue, not unreasonably, that AI surveillance reduces crime, improves traffic management, and enables faster emergency response. These are legitimate uses, and they’re real. I’m not dismissing them.

But there’s a documented parallel track. Human rights organizations, including Amnesty International and Human Rights Watch, have reported cases of activists, journalists, and political opponents in Gulf states being tracked, arrested, and prosecuted using digital surveillance evidence. The same infrastructure that monitors traffic can monitor dissent. That dual-use reality is not a theoretical risk; it has already materialized.

The design choice embedded in these systems is that the government decides what counts as a legitimate use. There’s no independent judicial oversight equivalent to what exists in European democracies, nor is there an equivalent of the GDPR that places meaningful limits on state collection of biometric data. The AI surveillance infrastructure is being built first, and the governance is being figured out — if at all — later.

AI-Powered Disinformation and Influence Operations

AI has dramatically lowered the cost of producing political disinformation at scale. What once required a significant content farm operation — hundreds of writers, translators, social media managers — can now be achieved with a handful of people and access to a large language model. The implications for a region with multiple ongoing political conflicts and intense information competition are significant.

Iran has been explicitly linked to AI-generated influence operations targeting Israeli public opinion. Researchers at Meta and cybersecurity firms have traced networks of AI-generated Arabic and Farsi content — fake news articles, synthetic social media accounts, coordinated amplification campaigns — to state-linked Iranian actors. The content is sophisticated enough to pass casual inspection and reaches millions of users across platforms that struggle to moderate non-English content at scale.

Gulf states have, by multiple accounts, used AI-assisted content operations to shape regional narratives — both domestically and internationally. This isn’t unique to the Middle East; every major power does something similar. But the combination of high AI investment, active regional conflicts, and multilingual populations creates a particularly fertile environment for AI-amplified information warfare.

Deepfakes are part of this picture, too. Synthetic video of political figures is easier to produce than ever, and harder for ordinary people to detect. The tools are available to anyone with modest technical capability and a political motive. In a region where trust in institutions is already fragile for many populations, AI-generated disinformation has an outsized destabilizing potential.

AI in Active Conflict Zones

The most significant and contested AI story in the region — possibly globally — is Israel’s use of AI systems in military targeting.

In April 2024, +972 Magazine and Local Call published an investigation into a system called Lavender. According to the investigation, based on testimony from Israeli intelligence officers, Lavender was used to generate targeting recommendations — lists of individuals identified by the AI as likely Hamas or Palestinian Islamic Jihad members — during the early phases of the conflict in Gaza. The investigation found that tens of thousands of individuals were added to these lists with limited human review, and that strikes were sometimes carried out against individuals at home with their families on the basis of these AI-generated recommendations.

Israel has disputed elements of this characterization, and the full operational details remain classified. But Israeli military officials have acknowledged using AI tools to accelerate targeting processes. The underlying ethical question, regardless of the specific details of Lavender, is not going away.

When AI is used to generate targeting recommendations at speed and scale, who is accountable for errors? If the AI misclassifies a civilian as a combatant and a strike follows, where does responsibility lie — with the algorithm, the officer who approved the recommendation, the official who commissioned the system, or the engineers who built it? International humanitarian law requires meaningful human oversight of lethal force. Whether AI-assisted targeting at operational speed satisfies that requirement is a question international courts and legal scholars are actively grappling with.

My honest view: the speed-vs-accountability trade-off in AI military targeting is one of the most important ethical questions of our time, and it’s being resolved by operational practice in active conflict zones rather than by deliberate international agreement. That’s a problem.

What This Means for the Rest of the World

This post has treated the Middle East as a testing ground, and I think that framing is accurate, though it risks making the region sound like a passive laboratory rather than a collection of active political actors with their own interests and strategies. It’s both.

The questions being resolved — implicitly, through operational use — in the Middle East are the questions every country will face:

- When is AI-assisted military targeting ethical and legal? There is no settled international consensus. Active conflicts are creating facts on the ground before the legal framework catches up.

- Who controls sovereign AI? Gulf state investments in domestic LLMs and AI infrastructure are partly about reducing geopolitical dependence. Every country with the means to do so will face the same pressure.

- How does the export of surveillance AI affect human rights globally? Technology developed and standardized in the Gulf states is licensed and exported. Facial recognition systems, social scoring infrastructure, and content monitoring tools travel.

- What happens to democratic accountability when AI accelerates decision-making? When targeting recommendations are generated in seconds and acted on in minutes, the traditional chain of accountability — from order to action to review — breaks down.

None of these questions has clean answers. But ignoring them — or treating the Middle East’s AI story as distant and irrelevant — would be a serious mistake. What becomes established as normal practice here will inform the norms, legal frameworks, and commercial products that the rest of the world inherits.

Frequently Asked Questions

How is AI being used in the Middle East?

AI is being deployed across surveillance (facial recognition, smart cities), military targeting, national AI infrastructure (UAE Falcon LLM, Saudi NEOM), and political influence operations. The region spans almost every major category of AI application, making it unusually significant as a case study.

Which Middle East countries are investing most in AI?

Saudi Arabia and the UAE are the largest investors by capital deployed. Saudi Arabia’s Public Investment Fund has committed $40 billion to AI infrastructure. The UAE’s G42 is one of the world’s largest private AI companies and secured a $1.5 billion investment from Microsoft in 2024, while Abu Dhabi’s Technology Innovation Institute developed the Falcon LLM. Israel leads in military and intelligence AI applications, with a long-established signals intelligence ecosystem centered on Unit 8200.

Is AI used in warfare in the Middle East?

Yes. Israel has acknowledged using AI-assisted targeting systems in its military operations. The ethics and legality of AI in military targeting — particularly regarding human oversight of lethal decisions — are major ongoing international debates. The Lavender system, investigated by +972 Magazine, brought this question into sharp public focus in 2024. Other actors in the region are developing military AI capabilities as well, though with less publicly available detail.

The AI infrastructure powering all of this has its own environmental cost. This post on AI’s environmental impact has the numbers on data centers, energy use, and what the industry isn’t telling you.
