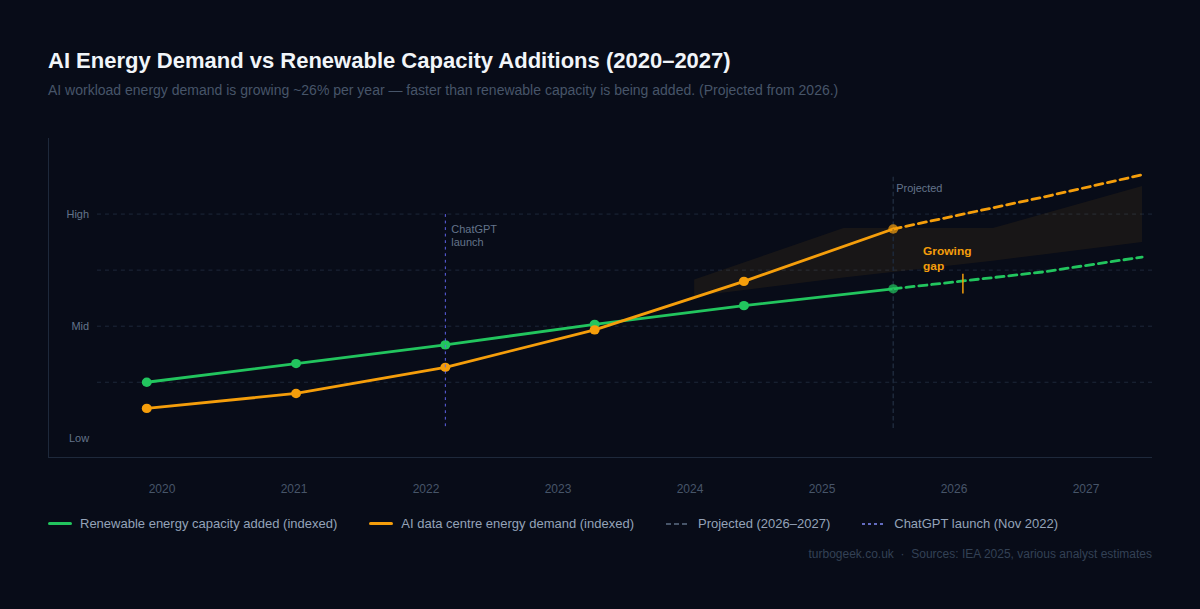

AI companies talk a lot about sustainability. Their data centres tell a different story. Energy consumption from AI workloads is growing faster than renewable capacity is being added. Here’s what the numbers actually say — and why the gap between what the industry promises and what it delivers keeps getting wider.

TL;DR

- The Scale: Data centres use 1–2% of global electricity; AI workloads growing at ~26% per year

- Training vs inference: Training one model uses 50+ GWh — but inference running 24/7 dwarfs that total

- Pledges vs reality: Microsoft carbon up 30% since 2020; Google missed its 2020 carbon-neutral target

- Water use: Microsoft’s data centres used 1.7 billion gallons of water in 2023 — up 34% year-on-year

- The response: Nuclear restarts (Three Mile Island), green energy deals — real investment but lagging demand

| Metric | Figure | Source |
|---|---|---|
| Global data centre electricity use | ~1–2% of global total | IEA 2025 |
| AI workload energy growth (CAGR) | ~26% | IEA 2025 |
| Microsoft data centre water use | 1.7bn gallons/year (2023) | Microsoft ESG Report |
| Google carbon-neutral claim vs actual | Net zero by 2030 (behind target) | Google 2024 ESG |
| GPT-4 training energy estimate | ~50 GWh | Various estimates |

New to this topic? Start with The Scale of the Problem below — the numbers put everything else in context.

The Scale of the Problem

Let’s start with the baseline. According to the IEA’s 2025 report, data centres currently consume somewhere between 1% and 2% of global electricity. That sounds modest until you consider that it puts data centres in the same bracket as the electricity use of entire nations, and that the figure is growing at roughly 26% per year, driven primarily by AI workloads.
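To get a feel for what 26% a year means, here is a minimal sketch of the compound-growth arithmetic. It assumes the rate stays constant, which is a simplification rather than a forecast, and the function names are mine, not from any report.

```python
import math


def doubling_time_years(annual_growth: float) -> float:
    """Years for a quantity to double at a constant compound annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


def projected_multiple(annual_growth: float, years: int) -> float:
    """How many times larger the quantity is after `years` of compound growth."""
    return (1 + annual_growth) ** years


if __name__ == "__main__":
    growth = 0.26  # the ~26% per year figure quoted above
    print(f"Doubling time: {doubling_time_years(growth):.1f} years")
    print(f"Multiple after 5 years: {projected_multiple(growth, 5):.2f}x")
```

At a steady 26%, consumption doubles roughly every three years and just over triples in five.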

Training a large model like GPT-4 is estimated to consume around 50 GWh — enough to power approximately 5,000 US homes for a full year. That’s a one-time cost, and it’s significant. But training is not where the real consumption happens.
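The homes comparison is easy to sanity-check. The sketch below assumes a typical US home uses about 10,500 kWh of electricity a year; that figure is my assumption for illustration, so swap in whatever household average you trust.

```python
KWH_PER_GWH = 1_000_000

# Assumption: a typical US home uses roughly 10,500 kWh of electricity per year.
ASSUMED_KWH_PER_US_HOME_PER_YEAR = 10_500


def homes_powered_for_a_year(
    energy_gwh: float,
    kwh_per_home: float = ASSUMED_KWH_PER_US_HOME_PER_YEAR,
) -> float:
    """Number of homes a given amount of energy could supply for one year."""
    return energy_gwh * KWH_PER_GWH / kwh_per_home


if __name__ == "__main__":
    homes = homes_powered_for_a_year(50)  # the ~50 GWh training estimate
    print(f"About {homes:,.0f} homes for a year")
```

Under that assumption, 50 GWh works out to a little under 5,000 homes, which is consistent with the rounded figure above.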

Inference — the process of actually running a model to answer a question — individually consumes far less energy than training. But inference runs continuously, at scale, around the clock. Every query to ChatGPT, every Copilot suggestion, every AI-assisted search result draws from data centre power. When you aggregate that across hundreds of millions of users and billions of daily requests, inference dwarfs training in total energy consumed.
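A rough way to see why inference overtakes training is to ask how many days of queries it takes to match one training run. The per-query energy and daily query volume below are hypothetical placeholders, not measured figures; only the 50 GWh training estimate comes from the article.

```python
WH_PER_GWH = 1_000_000_000


def days_until_inference_matches_training(
    training_gwh: float,
    wh_per_query: float,
    queries_per_day: float,
) -> float:
    """Days of inference needed to consume as much energy as one training run."""
    if wh_per_query <= 0 or queries_per_day <= 0:
        raise ValueError("wh_per_query and queries_per_day must be positive")
    daily_inference_gwh = wh_per_query * queries_per_day / WH_PER_GWH
    return training_gwh / daily_inference_gwh


if __name__ == "__main__":
    days = days_until_inference_matches_training(
        training_gwh=50,                # training estimate quoted above
        wh_per_query=0.3,               # hypothetical energy per query
        queries_per_day=1_000_000_000,  # hypothetical daily volume
    )
    print(f"Inference matches the training run after about {days:.0f} days")
```

With those placeholder inputs the break-even point arrives in under six months, and every day after that adds to the inference side only. Change the inputs and the date moves, but the shape of the argument stays the same.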

Water is a less-discussed dimension of the same problem. Data centres require enormous quantities of water for cooling. Microsoft’s data centres used 1.7 billion gallons of water in 2023 — a 34% increase year-on-year. As AI demand has accelerated, so has the water footprint, and that pressure lands hardest in regions already facing water stress.

What the Big Players Are Saying (vs What They’re Doing)

Every major AI company has a sustainability commitment. Microsoft has pledged to be carbon negative by 2030. Google has a net-zero target. Amazon and Meta have various renewable energy and emissions reduction commitments. These are the pledges. Here’s the reality.

Microsoft’s absolute carbon emissions grew by 30% between 2020 and 2023 — despite those commitments. Google missed its 2020 carbon-neutral goal and has pushed its net-zero timeline to 2030, with the company itself acknowledging it is “on track to fall short.” Amazon’s data centre energy demand grew faster than its renewable energy procurement in recent years.

I want to be clear about what’s actually happening here: it’s not that these companies are being dishonest in their sustainability reports. It’s that AI demand has exploded faster than anyone — including the companies themselves — anticipated. The gap between pledge and reality isn’t primarily about bad faith; it’s about a technological adoption curve that has outpaced the energy transition.

That said, the industry has a credibility problem. Carbon offsets (buying credits rather than actually reducing emissions) have been a significant part of how companies have maintained their “on track” narratives. Offsets are not the same as not emitting. The industry knows this, yet companies continue to use offset-heavy accounting because actual emission reductions are structurally hard given the pace of AI deployment.

The AI Workload Spike Since ChatGPT

The inflection point is November 2022. Before ChatGPT launched, data centre energy growth was significant but relatively predictable: the hyperscalers were building at pace, but capacity grew steadily rather than exponentially. After ChatGPT, everything changed.

Nvidia’s data centre revenue tells the story bluntly. In 2022, Nvidia generated approximately $15 billion from data centre sales. By 2025, that figure had surpassed $115 billion. Every dollar of that revenue represents GPU capacity being deployed into data centres that need power, cooling, and physical space. The compounding is extraordinary.

The clearest symbol of where this is heading: Microsoft signed a 20-year power purchase agreement with Constellation Energy to restart Unit 1 of the Three Mile Island nuclear plant in Pennsylvania, specifically to supply power for its AI data centres. This is not a hypothetical future scenario. The reactor, which had been offline since 2019, is being brought back under that agreement. A nuclear power station is being restarted to run AI workloads. That’s the scale we’re talking about.

Nuclear, Renewables and the Energy Transition

Here’s where my view gets more nuanced, because the story isn’t purely negative.

The AI industry’s energy demand is creating genuine investment pressure that is accelerating parts of the clean energy transition. Microsoft’s Three Mile Island deal is the most dramatic example. Google has made significant investments in advanced nuclear technologies, including commitments to fund small modular reactor development. Amazon has co-located data centres with solar farms and signed some of the largest renewable energy purchase agreements in history.

Some of this is greenwashing — companies managing their image while the absolute numbers worsen. But some of it is genuinely creating new economic models for clean energy that might not otherwise have attracted sufficient capital. AI’s voracious energy appetite is, perversely, one of the forces that might make the economics of nuclear and large-scale renewables work at scale.

My honest assessment: AI’s energy use is a real and growing problem. The industry’s investment in solutions is real but clearly lagging the demand curve. The next five years will determine whether the clean energy investment triggered by AI demand catches up, or whether we lock in a decade of fossil-fuel-dependent AI scaling.

What the Industry Should Do (and What You Can Do)

The industry has some clear obligations here. First, transparent energy reporting — actual consumption figures, not offset-adjusted numbers. Second, actual (not offset) renewable procurement, meaning physically co-located or directly contracted clean energy rather than tradable certificates. Third, efficiency standards — there is significant variation in how efficiently different models and hardware configurations use energy, and standardising around efficiency would have a meaningful impact.

For developers specifically — and this is the bit that often gets missed — the choices you make daily have a real if small cumulative impact. Use efficient models when capability allows. Claude Haiku for simple classification tasks rather than the full Opus model. GPT-4o mini rather than GPT-4o for routine text processing. The capability gap for simple tasks is negligible; the energy difference is not. Prefer API providers who publish verified renewable energy metrics. And when building AI-heavy applications, factor model size into your architecture decisions rather than defaulting to the largest available.
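One way to make "don't default to the largest model" a habit is to route by task type. This is a minimal sketch, not a recommendation of specific products: the tier labels and the task-to-tier mapping are hypothetical, and you would replace them with your provider's real model identifiers and your own quality checks.

```python
# Hypothetical tier labels; replace with your provider's real model identifiers.
SMALL, MEDIUM, LARGE = "small-model", "medium-model", "large-model"
TIERS = [SMALL, MEDIUM, LARGE]

# Assumed mapping from task type to the smallest tier that usually suffices.
SMALLEST_ADEQUATE_TIER = {
    "classification": SMALL,
    "extraction": SMALL,
    "summarisation": MEDIUM,
    "multi_step_reasoning": LARGE,
}


def pick_model(task_type: str, escalate: bool = False) -> str:
    """Return the smallest tier assumed adequate for the task.

    Unknown task types start at MEDIUM rather than the largest model.
    `escalate=True` moves one tier up, e.g. after a failed quality check.
    """
    chosen = SMALLEST_ADEQUATE_TIER.get(task_type, MEDIUM)
    if escalate:
        chosen = TIERS[min(TIERS.index(chosen) + 1, len(TIERS) - 1)]
    return chosen


if __name__ == "__main__":
    print(pick_model("classification"))                 # small-model
    print(pick_model("classification", escalate=True))  # medium-model
    print(pick_model("something_new"))                  # medium-model
```

The design choice is to start small and escalate only when the output fails a check, rather than starting large and never looking back.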

None of this absolves the industry of its structural responsibilities. Individual developer choices are a rounding error compared to hyperscaler infrastructure decisions. But they’re not zero, and the habit of reaching for the most powerful model by default is worth examining.

Frequently Asked Questions

How much energy does AI use?

AI workloads run in data centres that collectively consume approximately 1–2% of global electricity, a figure growing at around 26% per year, driven by AI demand. A single large model training run, like GPT-4, can use 50+ GWh, equivalent to powering roughly 5,000 US homes for a year. But inference (running models continuously at scale) accounts for the largest share of cumulative energy consumption because it runs 24/7 across billions of queries.

Is AI bad for the environment?

AI has a significant and growing environmental footprint. Energy use is rising faster than renewable capacity is being added. Water consumption for cooling is a parallel concern — Microsoft’s data centres alone used 1.7 billion gallons in 2023, up 34% year-on-year. The industry has sustainability pledges, but absolute emissions are rising and execution is clearly lagging the pace of AI deployment. That said, AI is also driving real investment in clean energy that might not otherwise have happened at this scale or speed.

Which AI companies are most sustainable?

All major AI companies — Microsoft, Google, Amazon, Meta — have sustainability commitments, and all are falling behind as AI demand scales faster than clean energy procurement. Microsoft and Google are investing most heavily in clean energy including nuclear. Google has committed to net zero by 2030 and Microsoft to carbon negative by the same date. The honest answer is that none of them are currently meeting their own targets, and the gap is widening as AI demand accelerates.

The same AI investment driving energy demand is also reshaping global politics and raising serious questions about power and surveillance. A related piece, https://www.turbogeek.co.uk/ai-politics-middle-east, looks at how AI is being used for surveillance and influence in the Middle East, and what it means for the rest of us.
